by Scott Jehl

An Event Apart Online Together – Human Centered Design

1:30pm Central

Performance is PART of Accessibility – it’s a subset. When you optimize performance, you optimize accessibility too.

In that phrase you can think of accessibility in two ways:

- Improving performance makes a site more accessible to more people. Example: Chris Zacharias @ YouTube: The YouTube team was experimenting with performance and created a lightweight version of the site called Feather. As they watched the average page load time, they noticed it was actually slower on this ‘lightweight’ version than on the normal version. What was happening is that people with slow internet connections who normally couldn’t USE the YouTube site were able to use Feather, so the average page load time was longer than for a typical user. But load time wasn’t the only thing that mattered, because these people were now able to USE the service. Better performance allowed new people to use the product.

- Optimizing performance also makes a site more Accessible (as in, ensuring a site is usable and meaningful specifically for folks who rely on assistive technology). Screen readers are one example of assistive technology. A braille reader, seen here, is another. There are a number of steps in the page loading process to expose a web page’s content from the browser to the assistive technology. Even on a page that’s optimized to render quickly on a screen, that can take longer than you would think.

On average, devices worldwide are slower than the ones we carry in our pockets. Performance optimizes access.

He likes to think about optimizing accessibility broadly – to optimize access for EVERYONE, and also

Generally, speeding up performance should shorten the time for everyone to access a website. Unfortunately, when we optimize performance today we CAN run the risk of un-optimizing accessibility, or optimizing performance at the EXPENSE of accessibility. This has to do with bias and the metrics we prioritize in the performance circle.

We have known for a long time we wanted to reduce the time it takes to load a website, and for a while now we’ve focused on perceived performance (which has to do with when the user starts to see the page loading aka ‘first paint’).

Tool: Lighthouse – Chrome DevTools: It does a fantastic job of analyzing how a page loads. It shows lots of metrics for ‘perceived’ performance. Google PageSpeed Insights also runs Lighthouse.

As we aim to improve these perceived metrics, an unintentional thing has been happening – we’ve championed ways to make a page APPEAR usable before it actually is. This optimization technique can also enable bad practices, which brings us back to this bias – what is the perceived performance of this experience for users with assistive technology?

Often, the early versions of a page aren’t yet usable to all users, not until all interactivity is available. Screen readers rely not just on how a page appears but on how it communicates. For example, a switch on a page may load initially as just a DIV and then, later on when JavaScript loads, become an interactive switch. Not until the interactive functionality is available does a screen reader see that switch as a switch – until then it’s just a text label.

Custom interactions like a switch control involve a little effort to expose meaning from what otherwise is just a DIV. Once the attributes are present, a screen reader can then describe what it is and allow the user to interact with it.
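As a rough illustration of the attributes described above, here is a minimal TypeScript sketch. The function names (`switchAttributes`, `renderSwitch`) and the "Dark mode" label are hypothetical and not from the talk. It assumes the standard ARIA `switch` role with `aria-checked`, plus `tabindex="0"` so the DIV can receive keyboard focus, and it assumes the label is trusted text that needs no escaping.

```typescript
// Attributes a script has to set before assistive technology can treat a
// plain <div> as a switch. Until they are applied, only the text label is exposed.
type SwitchAttributes = {
  role: "switch";
  "aria-checked": "true" | "false";
  tabindex: "0";
};

// Hypothetical helper: derive the attribute values from the switch state.
function switchAttributes(isOn: boolean): SwitchAttributes {
  return {
    role: "switch",
    "aria-checked": isOn ? "true" : "false",
    tabindex: "0",
  };
}

// Hypothetical helper: render the markup as a string.
// ASSUMPTION: label is trusted text, so it is not escaped here.
function renderSwitch(label: string, isOn: boolean): string {
  const a = switchAttributes(isOn);
  return `<div role="${a.role}" aria-checked="${a["aria-checked"]}" tabindex="${a.tabindex}">${label}</div>`;
}

const darkModeSwitch = renderSwitch("Dark mode", true);
```

A scripted control like this would still need click and keyboard handlers before it is truly interactive, which is part of the cost compared with a native input.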

JavaScript-based controls are sometimes made meaningful long after they are loaded on a page. The cost of using custom scripted elements over, say, a radio input is considerable.

Unfortunately, performance has been getting worse. When cell networks first arrived they were slow, and we couldn’t afford to send huge files over them because it just took too long to download them. Now, we see cell networks that sometimes exceed the speed of our home Wi-Fi connections. 5G’s download and upload speeds are very fast, and it also improves latency, so it will turn around that code faster and in bulk.

IN THEORY, that increased speed should help our web performance problems. But web performance is often getting worse for the same users, and the reason is that the average device used around the world to access the internet is much, much slower to parse code and files than the devices we are used to using. These are average low-powered Android devices that are a year or two off the market. You can look at the best sellers of unlocked phones on Amazon. This is a good page to keep track of because it tells you what people are ACTUALLY buying around the world.

These phones are connected to the same networks, but they’re slow to process the files they download. Also, while it is parsing JavaScript, the phone is actually locked up until parsing is done.

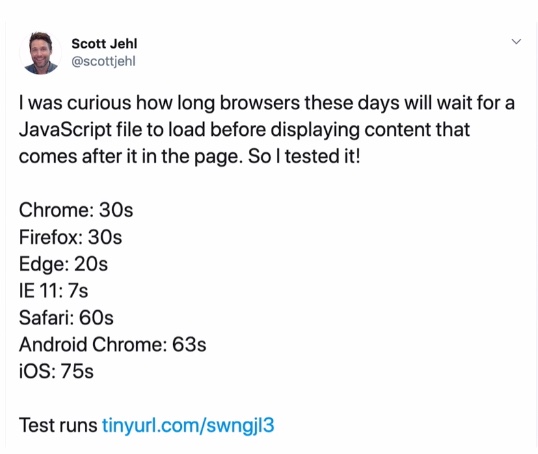

We send over 370 KB of JS to the average device. It takes over 6s to parse 200 KB of JavaScript code downloaded onto one of these low-powered average phones (on top of the download time).
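To put those two figures together, here is a back-of-the-envelope sketch in TypeScript. It assumes parse time scales linearly with script size, which is a simplification and not a claim from the talk. The function name `estimateParseSeconds` is made up for illustration.

```typescript
// Figures quoted in the notes above (both are lower bounds: "over 370", "over 6s").
const AVERAGE_JS_KB = 370;          // JS shipped to the average device
const REFERENCE_JS_KB = 200;        // script size in the quoted measurement
const REFERENCE_PARSE_SECONDS = 6;  // parse time for that size on a low-powered phone

// ASSUMPTION: parse time grows linearly with script size. Real devices will vary.
function estimateParseSeconds(jsKilobytes: number): number {
  return (jsKilobytes / REFERENCE_JS_KB) * REFERENCE_PARSE_SECONDS;
}

// Roughly 11 seconds of parsing for the average payload, before download time.
const averageParseEstimate = estimateParseSeconds(AVERAGE_JS_KB);
```

Under that assumption, the average payload would keep one of these phones busy for roughly 11 seconds on parsing alone.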

We have to be mindful of the load step where the page is visible but not usable yet.

We are shifting the burden from the network to the device.

Faster networks have allowed us to deliver increasing amounts of code and we have used that poorly.

How to do better?

- We should be optimizing our web delivery files so they can travel faster over the network.

- For image and video files, we want to use tools to optimize the physical file

- For fonts, we want to subset the font so it doesn’t carry characters we don’t need

- For CSS, JS, SVG, we should remove unnecessary whitespace and compress files with something like GZIP

File weight isn’t everything when it comes to perceived performance.

Just by prioritizing the way the page was delivered, without changing the size of the site, he was able to cut 9s off the time for when the site started to be visually rendered.

METRICS

Time to First Byte (TTFB)

The time between clicking a link and the first bit of content coming to the browser.

When you click a link, your browser needs to find the server and request a file. This time is spent on the network and the web server.

- Speeding up TTFB: Distribute files around the world on a CDN (ex: Cloudflare, Netlify, WP Engine’s built-in CDN)

- Minimize redirects as much as possible (reduce non-essential redirects)

- Cut down on server work time by doing less dynamic database API work (the fastest response is when the server can just read and turn around a static file).
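One way to see this metric in practice is to read it from the browser's Navigation Timing data. The sketch below is a minimal TypeScript example with made-up sample values. `NavigationTimingLike` and `timeToFirstByte` are hypothetical names, and the interface copies only two fields that exist on a real `PerformanceNavigationTiming` entry.

```typescript
// Subset of the fields on a browser PerformanceNavigationTiming entry.
interface NavigationTimingLike {
  startTime: number;     // when the navigation started, in milliseconds
  responseStart: number; // when the first byte of the response arrived, in milliseconds
}

// TTFB as the gap between starting the navigation and receiving the first byte.
function timeToFirstByte(entry: NavigationTimingLike): number {
  return entry.responseStart - entry.startTime;
}

// In a browser, the entry would typically come from:
//   performance.getEntriesByType("navigation")[0]
// The sample values below are invented for illustration.
const sampleNavigation: NavigationTimingLike = { startTime: 0, responseStart: 420 };
const sampleTtfbMs = timeToFirstByte(sampleNavigation);
```

Measured this way, the number includes redirects, connection setup, and server work, which are the same areas the tips above target.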

First Contentful Paint (FCP)

The first time pixels start to become visible to the user.

When you first make a request to fetch a website, the server will respond with some HTML, which streams to the browser. The browser can render parts of the page as it streams, even before it’s fully loaded. HTML is special like this. To start rendering the page visually, however, the browser needs to be FREE to do that. It will stop rendering if it encounters a reference to an external file it needs to fetch before it can proceed.

References to external CSS and JS files will pause the load of the visible page. Even at the speed of light, that time adds up. Hopefully the files are at least on the same server – if not, there will be even more delays as the browser will need to establish connections to third-party servers. Keeping files on the same domain is very helpful.

This is potentially a very big single point of failure. Even when things are working as expected, these delays are still very common.

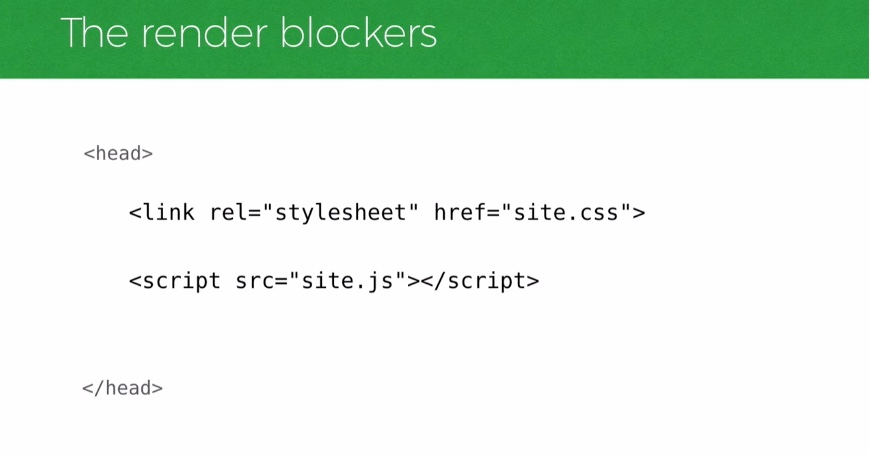

RENDER BLOCKERS

The heavy videos and images aren’t what is preventing your page from loading, it’s these LINKS and SCRIPT files that are in the way of the rendering path.

We need to DEFER them if they’re not critical to the initial rendering of the page.

For JS, it’s pretty straightforward and more common. The async and defer attributes will help unblock rendering.

Deferring JS is one of the absolute most important things you can do to reduce time to First Paint.
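To make the two attributes concrete, here is a small TypeScript sketch that builds the script tags as strings. The `scriptTag` helper and the file paths are hypothetical. The behavior described in the comments is standard HTML for external scripts.

```typescript
// "blocking": HTML parsing pauses while the script downloads and runs.
// "async":    downloads in parallel and runs as soon as it arrives, in no guaranteed order.
// "defer":    downloads in parallel and runs in document order after parsing finishes.
type ScriptLoading = "blocking" | "async" | "defer";

// Hypothetical helper that renders a script tag for the chosen loading mode.
function scriptTag(src: string, loading: ScriptLoading): string {
  const attribute = loading === "blocking" ? "" : ` ${loading}`;
  return `<script src="${src}"${attribute}></script>`;
}

// Illustrative file names, not from the talk.
const deferredApp = scriptTag("/js/app.js", "defer");
const asyncAnalytics = scriptTag("/js/analytics.js", "async");
```

As a rule of thumb, `defer` suits scripts that depend on each other or on the DOM, while `async` suits independent scripts such as analytics.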

CSS is less straightforward:

What if you want to let your CSS load without blocking the page? It’s not as intuitive as it is with JS. There is no async or defer attribute for CSS. Stylesheets that apply to the current rendering do block page rendering. There are attributes that prevent that, like media=“print” for example. It’s great that these types of stylesheets load in the background rather than blocking the page, because there’s no sense in blocking rendering for styles that don’t apply to the screen.

We can take advantage of this asynchronous loading behavior by mixing the attributes in a clever way. To do this we set the media attribute to PRINT (which causes that stylesheet to load in the background and lets the page render while it is loading) and then add an ONLOAD attribute that, once the stylesheet has loaded, sets the media attribute back to ‘all’. That causes the stylesheet to actually apply to the page.

By doing this we reproduce the same behavior as an asynchronous load.
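The attribute combination described above can be sketched as follows. The `asyncStylesheetTag` helper and the stylesheet path are hypothetical. The markup it emits uses only the `media` and `onload` attributes mentioned in the notes.

```typescript
// Builds a link tag that loads a stylesheet without blocking rendering:
// media="print" makes the browser fetch it in the background, and the
// onload handler switches media to "all" once the file has arrived.
function asyncStylesheetTag(href: string): string {
  return `<link rel="stylesheet" href="${href}" media="print" onload="this.media='all'">`;
}

// Illustrative path, not from the talk.
const nonCriticalCss = asyncStylesheetTag("/css/non-critical.css");
```

Because the handler needs JavaScript to run, a fallback for users without scripting would need to be handled separately.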

BUT, it’s rare that we want this behavior for all of our CSS, because the page would first render unstyled and create a ‘flash of unstyled content’, which is not something we want. It’s actually horrible for usability because it’s very disorienting.

So SOME of your CSS needs to be rendered on page load.

We want to start thinking about page delivery like ‘what do I need to bring with me’?

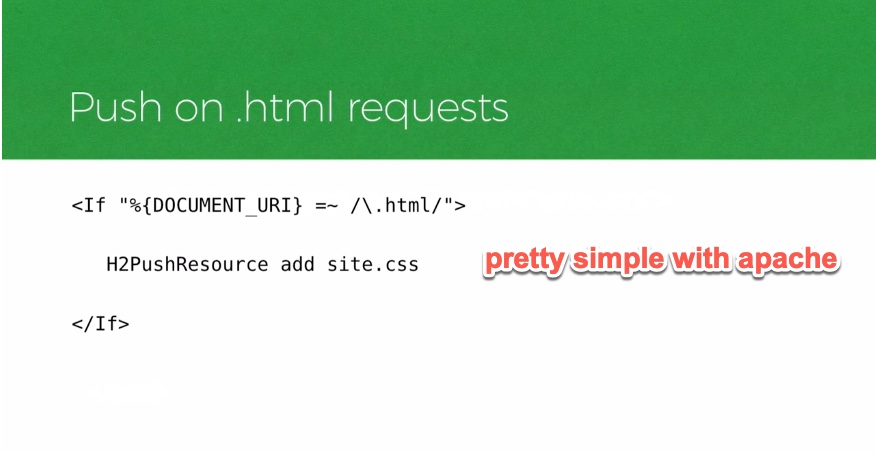

- Server Push – a new technology that comes with HTTP/2. When the browser requests a particular file, the server can decide to send that file AND another file you tell it to send. This is helpful and can save a lot of time on blocking requests by avoiding extra trips back to the server. The HTML receiving that file doesn’t need to do anything special.

- Push support on web servers does vary widely. If you’re running Apache, setting up server push is pretty painless.

- Push is helpful to a point but you don’t want to overdo it. If you bring more than you need you’ll make the page slower.

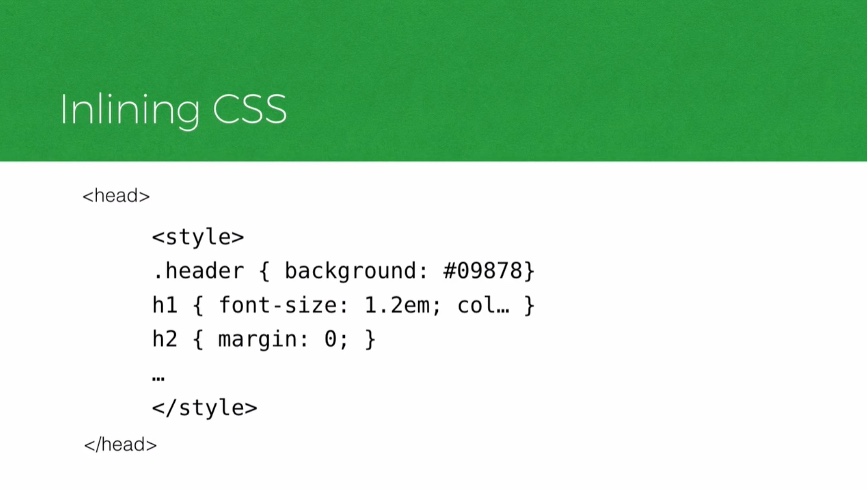

- Inlining Files – if your CSS is small enough you can inline it right into the HTML. Google has been doing this on Google Search Results for decades. It just works. This does require changing the HTML itself.

- This is bad for caching.

- There are ways to work around the caching downside if you’re interested – there’s a blog post on the Filament Group’s site. Essentially it uses service workers to move inline CSS into its own files in the browser cache so they can be requested and reused on subsequent pages.

Largest Contentful Paint (LCP)

The render time of the largest content element visible in the viewport. This is when the visitor starts to perceive a usable page.

Images – Don’t just optimize images, make them responsive. Offer different versions of them based on viewport size, network conditions, etc. You can send different

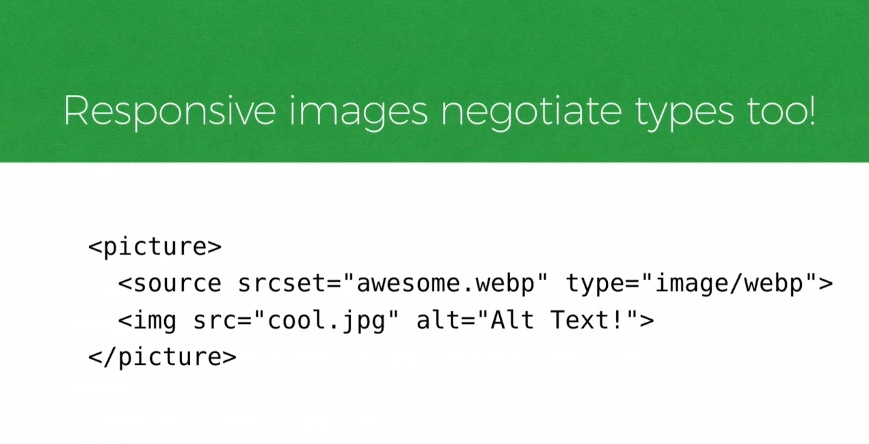

The image srcset attribute is his recommended way to do this. The picture element is more prescriptive (a little more control for cropping here).

You can also use responsive images to negotiate types.

Browsers are going to download images they find in the HTML unless you tell them otherwise. There is a native way to tell the browser to wait to load an image. You can add a loading attribute and set it to “lazy”, and the browser can decide to load the image when it becomes visible.
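Here is a minimal TypeScript sketch that combines srcset with lazy loading. The helper names, image paths, widths, and the `sizes` value are all made up for illustration. The `srcset`, `sizes`, and `loading` attributes themselves are standard HTML.

```typescript
// One candidate file and its intrinsic width in pixels.
interface ImageCandidate {
  url: string;
  widthPx: number;
}

// Builds a srcset value such as "small.jpg 400w, large.jpg 800w".
function buildSrcset(candidates: ImageCandidate[]): string {
  return candidates.map((c) => `${c.url} ${c.widthPx}w`).join(", ");
}

// Hypothetical helper: an img tag the browser may defer until it nears the viewport.
// ASSUMPTION: all arguments are trusted strings, so nothing is escaped.
function lazyImageTag(fallbackUrl: string, candidates: ImageCandidate[], sizes: string, alt: string): string {
  return `<img src="${fallbackUrl}" srcset="${buildSrcset(candidates)}" sizes="${sizes}" alt="${alt}" loading="lazy">`;
}

// Illustrative values, not from the talk.
const heroCandidates: ImageCandidate[] = [
  { url: "/img/hero-400.jpg", widthPx: 400 },
  { url: "/img/hero-800.jpg", widthPx: 800 },
];
const heroSrcset = buildSrcset(heroCandidates);
const heroTag = lazyImageTag("/img/hero-400.jpg", heroCandidates, "100vw", "Team photo");
```

The browser picks one candidate from the list based on the `sizes` hint and the device, so only one of the files is downloaded.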

Video – it’s important to offer up different formats to the browser. The VIDEO element is what you need (similar to PICTURE) in the markup language. You can specify multiple sources and offer up different sizes of the video. Also don’t forget to caption your video! This is essential accessibility – you can include a TRACK element that points to a VTT file for that video. Don’t forget to include this!
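The VIDEO, source, and TRACK combination can be sketched like this in TypeScript. The helper name, file paths, and the English language choice are assumptions for illustration. The elements and attributes themselves are standard HTML.

```typescript
// One encoded version of the video and its MIME type.
interface VideoSource {
  url: string;
  mimeType: string;
}

// Hypothetical helper: a video element with several sources and a captions track.
// The browser uses the first source whose type it can play.
// ASSUMPTION: captions are in English; adjust srclang and label otherwise.
function captionedVideoTag(sources: VideoSource[], captionsUrl: string): string {
  const sourceTags = sources
    .map((s) => `<source src="${s.url}" type="${s.mimeType}">`)
    .join("");
  const trackTag = `<track kind="captions" src="${captionsUrl}" srclang="en" label="English" default>`;
  return `<video controls>${sourceTags}${trackTag}</video>`;
}

// Illustrative file names, not from the talk.
const talkVideo = captionedVideoTag(
  [
    { url: "/video/talk.webm", mimeType: "video/webm" },
    { url: "/video/talk.mp4", mimeType: "video/mp4" },
  ],
  "/video/talk.en.vtt",
);
```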

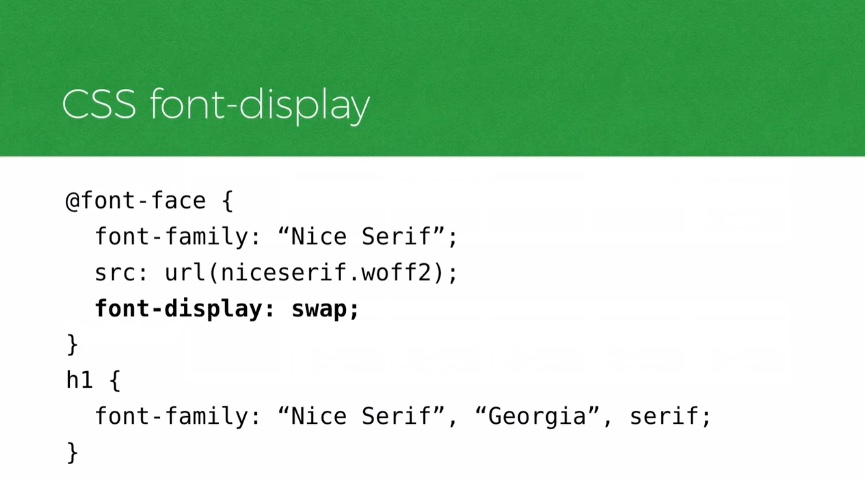

Fonts – Many browsers hide the text of a page while a custom font is loading. This delay happens on most browsers for up to 3 seconds. One way we can show the text sooner is to aim for a more progressive font rendering process. EX:
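The example from the talk is not captured in these notes. As a stand-in, one common way to get more progressive font rendering is the CSS `font-display: swap` descriptor, which shows text in a fallback font right away and swaps in the custom font once it loads. The TypeScript sketch below builds such a rule. The helper name, font family, and file path are hypothetical.

```typescript
// Builds an @font-face rule that does not hide text while the font downloads.
// ASSUMPTION: the font is served as a WOFF2 file at the given URL.
function fontFaceRule(family: string, woff2Url: string): string {
  return [
    "@font-face {",
    `  font-family: "${family}";`,
    `  src: url("${woff2Url}") format("woff2");`,
    "  font-display: swap;", // render fallback text immediately, swap when loaded
    "}",
  ].join("\n");
}

// Illustrative values, not from the talk.
const bodyFontRule = fontFaceRule("Example Sans", "/fonts/example-sans.woff2");
```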

Lastly, a MAJOR problem source: Over-reliance on JavaScript to generate content in the page.

This is a huge blocker for accessibility as well, because the screen reader can’t read what’s on the page until it’s generated. The code for this problem looks like an empty container element on the page, plus some client-side JavaScript that consumes data from an external feed and GENERATES the HTML on the client side. This is an anti-pattern for performance.

Fortunately today’s frameworks can work around this problem by rendering on the server and delivering that HTML to the browser first.

But as we do that we have to be very mindful about how much JS is required to take that static representation of the page it first renders and make it interactive.
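The server-rendering idea above can be sketched without any framework. In this minimal TypeScript example, the `Article` type, the function names, and the sample data are all hypothetical. The point is only that the HTML is produced from the data before it reaches the browser, so the content is readable without waiting for client-side JavaScript.

```typescript
// Shape of one item from the (hypothetical) data feed.
interface Article {
  title: string;
  url: string;
}

// Escape characters that have special meaning in HTML text and attributes.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Runs on the server: turns feed data into finished HTML instead of
// shipping an empty container for the client to fill in.
function renderArticleList(articles: Article[]): string {
  const items = articles
    .map((a) => `<li><a href="${escapeHtml(a.url)}">${escapeHtml(a.title)}</a></li>`)
    .join("");
  return `<ul>${items}</ul>`;
}

// Made-up sample data for illustration.
const renderedList = renderArticleList([{ title: "Fast & accessible", url: "/posts/1" }]);
```

Plain links like these work as soon as the HTML arrives, so no extra JS is needed to make this particular markup interactive.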

Time to Interactive (TTI)

The time at which a page becomes interactive.

We have to cut back on the amount of JS we’re using to make a page interactive. Minifying, reducing whitespace, removing functions when we can, and leaning harder on HTML!

Oftentimes we don’t need to rely on JS to create custom elements. We often see framework driven approaches where JS is used to create custom versions of controls that we DO have natively in HTML. We should look to use those!

We need to reassess what is available to us in HTML natively before we rely on JS because there are huge impacts on performance and accessibility when we rely on JS.

We need more inclusive web performance metrics. In our ongoing push for practices that produce inclusive and accessible experiences by default, we need our performance metrics to be inclusive as well. By optimizing for early paints, things can look a little rosier than they actually are.

We are reassessing the status quo and challenging the ways we operate so we can create a more equitable world.

If a site isn’t accessible, it isn’t done yet.

@scottjehl

scottjehl.com
