April 4 @ 9am

An Event Apart Seattle 2018

WHAT MAKES US HUMAN?

Who thought of creativity? Empathy? Love?

Sure, some animals exhibit those characteristics as well.

How about… did anyone think of checking a box? NO OF COURSE NOT because that’s absurd! What could we possibly prove about our humanity by checking a box?

Yet we encounter this all the time.

She’s kind of a collector of meaning and fascinated by that subject.

Absurdity and meaning are sort of in this opposing relationship to each other. Where there is a lack of meaning defined, it creates this void into which absurdity can flow.

So her answer to the question ‘What makes us human?’ is that humans crave meaning (we seek it everywhere).

She was a linguist by education, so she approaches this from language:

- semantic

- significance

- status

- patterns

- truth

- purpose

- existential

- cosmic

At some level we all care about meaning.

Why this matters now is that we are entering a time where increasingly machines are changing the work that we do.

Meaning has played a really big part in how we approach our relationship with work. We take meaning from our work. Job titles have even become last names and been handed down! Baker, Blacksmith, Judge…

So what will it look like as machines change the composition of the work that we do, or if they even START doing our jobs?

We need to think about how to inject more meaning into the work that we do so as the composition of our landscape changes, we are surrounded by meaning that we have designed and built. The machines don’t understand meaning, we do.

Also… the ‘I am not a robot’ checkbox isn’t even safe. A robot can do that.

It’s important to recognize that all of the technology (every part of the infrastructure being built around us) has the opportunity to create greedy, manipulative experiences that are meaningless. Not good. But they could also be poorly planned, meaningless experiences, which is also not good.

The Tech-Centric Model:

Asks how to be more effective and more efficient, but in a completely profit-driven way. This leads to the opportunistic maximization of human motivations that are NOT our best motivations.

She does believe it’s possible and important to use this technology to make the most meaningful, humanistic world possible. If we’re all tech humanists, she would love that.

We talk a lot about improving the user experience, the customer experience, the reader experience, etc. But those are really just ROLES! If we step back from the roles we can look at the HUMAN experience.

The Human-Centric Model

The alternative to the tech-centric model. She works with companies to ask how we can create meaning from technology for humans. Think about the power of data and technology and how to make it make sense for those companies, but in a way that creates a better possible future world for humans.

Every solution we create has the ability to scale wildly because of this technology. She’s using data and technology to scale what is meaningful. We’re measuring profits to keep the lights on, but if there are no humans there in that room why do we care if the light is on?

Scale is usually about removing hard limitations so a new startup can grow and flourish.

The difference between growth and scale – Growth is really about one metric (increasing profit, or increasing users, etc.).

When you’re talking about Scale, you’re talking about all these metrics in concert with one another.

All of the things we build are going to have increasing resources fighting against them. It’s important as we think about this that we automate the meaning.

DON’T GIVE ABSURDITY A CHANCE TO SCALE. It will creep in and be like a weed you can’t get out.

Amazon Go example:

“When you take something off the shelf it’s added to your virtual cart, so don’t take something off the shelf for another person.”

… well what about in the future when Amazon Go or a checkout-less shopping experience is the norm? This seems like a little decision BUT if this is the norm, we will have a shopping experience where nobody helps anyone else! She likes helping others at the grocery store!

Uber example:

They were collecting users’ battery levels along with other data when people hailed a ride, and they found from research that people with very low batteries were more likely to be willing to pay surge prices. They swore they weren’t using this data in production but YUCK!

So, as you’re approaching your work, ask how you can create meaningful and integrated experiences?

Her book, Pixels and Place, discusses this.

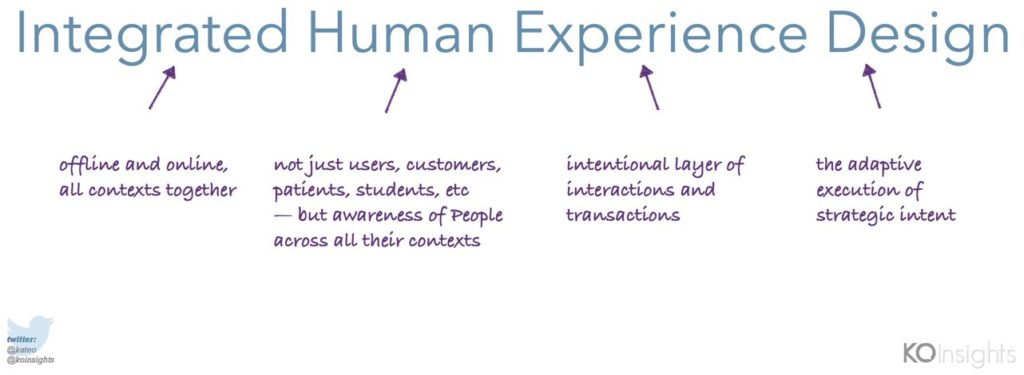

Integrated Human Experience Design

She defines Design as the adaptive execution of strategic intent.

You can have a North Star but you’re still going to want to do adaptive execution against it. Adjust, iterate, learn.

Some of the key elements are:

- integration

- dimensionality

- metaphors and cognitive associations

- intentionality / purpose

- value and emotional load

- alignment

- adaptation and iteration

(these are all detailed more in her book)

“All models are wrong but some are useful”

-George E. P. Box

She’s just trying to be useful by providing these guidelines.

It’s human nature for humans to drink water. But what format that water is in, how it’s delivered, and how that makes us feel about ourselves and the world is ‘the shape’ of that experience. So bottling up water in a heavy glass bottle with a minimalistic, cool typeface makes her feel very important… but she’s still a human drinking water.

As the shape of our surroundings changes, the shape of our experience changes, too.

This is literal but also metaphorical.

Human experiences do evolve but the SHAPES change more quickly than the nature. The more you can root your work in nature and meaning, the more it’s going to be steady and consistent and people will connect more with it.

Metaphor: it’s about creating a place in someone’s brain for them to conceive of the thing you’re giving them to do AS IF it’s another thing. It gives them a whole other set of vocabulary and visual constructs to think about that thing with.

Metadata: how you frame something. We want to have some sense of the data boundaries happening around an experience.

Airbnb example:

‘Don’t go there, live there’ – a metaphorical campaign about experiencing the city like you live there, not like a tourist. If you go online to TripAdvisor, which is tourist focused, and ask what the top 5 sites to visit in Paris are, you get a completely different list (with the exception of Luxembourg Garden) than the one provided by Airbnb, where the LOCALS provided their top 5 things to do. The answers (the metadata) are different because the metaphor is different.

It’s important that we think about this dimensional approach because the design of experiences online now regularly intersects with the design of experiences offline. Everything you experience in the physical world with your phones or computers or wearables (all with geolocation capabilities) completely intersects your online and offline experiences.

Carnival Cruise example:

Carnival Cruise’s wearable (looks like a watch) is supposed to be like a ‘digital concierge’ for guests.

It should let people pay for their food and drinks, navigate the ship, and get keyless entry to their rooms, and this all requires the use of data and metadata in a meaningful experience in the human world.

They’re creating a relevant experience. Relevance is a form of respect. They’re saying ‘we know what you want to do and we want to get out of your way so you can do that.’

We also have to be mindful to not overstep or be creepy…

Starbucks example:

Their app is very dimensional in the way it weaves together the navigation of physical place: she can be walking down the street in Manhattan, decide she wants a latte (which she often does), pick a location, put stuff in her cart, pay for it, and then walk in, pick it up, and stroll out.

All the while there’s a dimension of time, with the app very helpfully pointing out to her that her timing aligns. That little bit of data helps her make her decisions as she’s paying for coffee in the app. It just takes thinking about the user / human context and what you want to have happen for them.

Just about everywhere the physical world and digital world connect, the connective layer is THE DATA CAPTURED THROUGH HUMAN EXPERIENCE.

WE are what makes this happen, bringing the digital and human worlds together.

This is because Analytics ARE PEOPLE.

When analyzing business data, in the reports and graphs you look at, constantly remind yourself that the data represents the needs of REAL PEOPLE telling you what they want and need, and what you can do for them.

When looking at these examples, let’s ask ‘can we trust this data?’

Example: Tay was an AI built by Microsoft that was supposed to interact with people on Twitter.

Within a day of dealing with the most terrible users of Twitter, she became an anti-Semitic, racist, homophobe. What she was tweeting was terrible!

We can’t blame Tay because machines are what we encode of ourselves. We create the algorithms and make the decisions about the logic.

So ‘Can we trust the data?’ really is ‘Can we trust HOW WE USE and HOW WE ENCODE the data?’

The opportunity we have NOW and from now on is to build meaning at scale. We are going to be encoding decisions that are potentially affecting vast numbers of people in vast ways. Every one of our decisions can have echoing effects. But this isn’t meant to put pressure on us.

Google feeds their AI romance novels because they want their AI to understand deep, passionate feelings!

The Walc app provides human-like directions (turn left after the Apple Store, and again before the Chase Bank). Cool! That’s the right idea.

Nuances of subtle interpretation and humanized tech: We can tell the difference between –

Barn Owl or Apple

Muffin or Puppy

Labradoodle or Fried chicken

but a machine might not be able to.

Where can we add the most value? BY BEING HUMAN!!

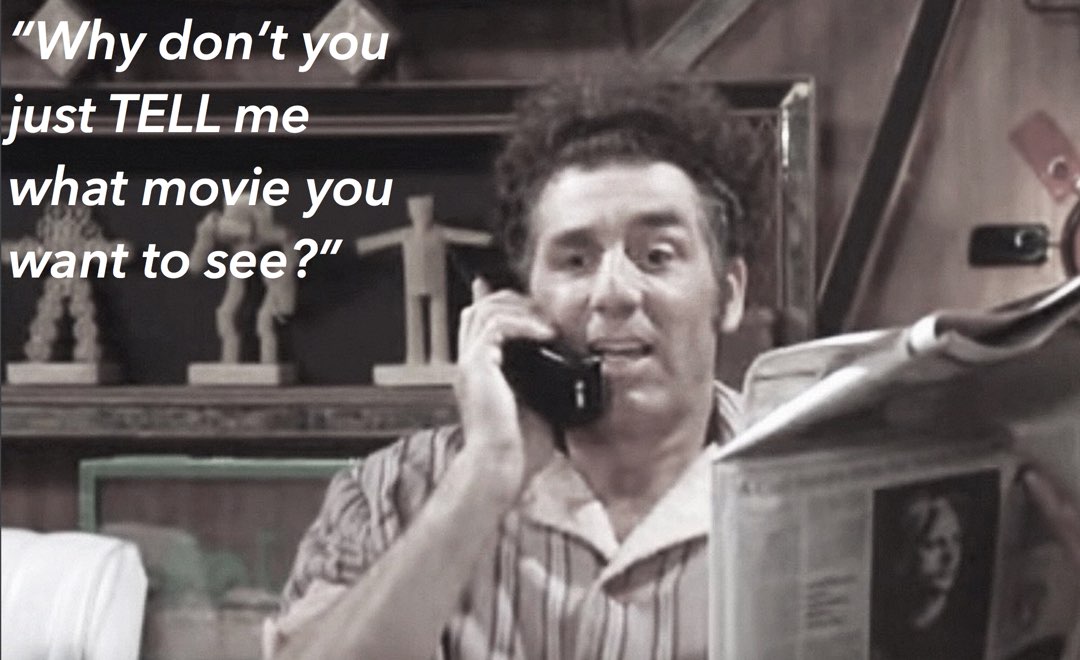

The Moviefone Kramer model –

Kramer gets a new number and it’s 1 digit off from Moviefone, which you actually had to call to see what movies were playing at your local theater. At one point he decides it’s just easier to pretend to be Moviefone, but he can’t understand touchtones, so he just asks ‘WHY DON’T YOU JUST TELL ME WHAT MOVIE YOU WANT TO SEE?!!!’

The more you go about the work you’re doing today, the more there’s going to be a need for things like chatbots.

Where can we add the meaningful understanding of nuance and let humans do what they do well?

How do we repurpose human skills and qualities to higher value roles? It’s going to happen by us solving the design problems in front of us by building human meaning into them.

Let’s create a future of human experiences that don’t foster absurdity, that preserve meaning; a humanity where our work has built meaning that can scale.

Ask yourself, ‘What are you trying to do at scale?’

For her, as a tech humanist, that answer is ‘to create more meaningful human experiences.’

@kateo

How do we sell this to the people holding the purse strings?

Sell it by talking about automation as an inevitable force, and that purpose and clarity in an organization are what bring meaning.

Is there a gap between the way people have always done things and how it should have always been?

Yes, and there’s a lot of compartmentalization that work invites. She knows someone who goes by one name at work and another name at home. So try to become integrated everywhere, even with yourself. The more we can think about this integrated world view the healthier we’ll be.

How do you see absurdity once you think perhaps it’s taken hold?

You can step back to look at purpose. Look at what the business is uniquely in operation to do. It’s not a mission statement, it’s a purpose statement, maybe 3-5 words that are very direct (like Disney’s ‘Create Magical Experiences’). If you can be that crisp and clear, everyone in the organization can connect to that. That will remove the absurdity because we’re not dealing with doublespeak anymore. It’s like a detox… like juicing for business!
