Preston So | Senior Director, Product Strategy, Oracle

An Event Apart Online Together – Fall Summit 2021

2:30pm Central

Intro: Preston So is a product strategist, developer advocate, digital experience futurist, researcher, speaker and author of Decoupled Drupal in Practice (Apress, 2018) and Voice Content and Usability (A Book Apart, 2021).

Preston is Senior Director of Product Strategy at Oracle, where he leads product strategy and developer relations for the Oracle Content and Experience team. He is also editor in chief at Tag1 Consulting, where he leads content marketing and hosts Tag1 Team Talks, a biweekly webinar series about emerging web technologies. He’s been a programmer since 1999, a web developer and designer since 2001, a creative professional since 2004, and a Drupal developer since 2007. Please welcome Preston So.

_______

Hey, An Event Apart! Hope everyone’s doing okay. Welcome to Elements Heard, Not Seen: Moving from Web Design to Voice Design. Let me start off this presentation right away with a little bit of a rhetorical question, but I really do want you to think about this.

You know how your website looks. You know how it behaves. You know how it operates. You know how it interacts with your users and how it actually works from the ground up. But let me ask you a question. How would your website sound if it were actually a voice interface like an Alexa assistant or a Google Home, or a Siri application? How would your website sound? How would it communicate?

There’s one big problem with designing for voice that we don’t really understand as web designers and web practitioners, and that is that designing for voice interfaces has no visual canvas per se. There’s no Photoshop, there’s no Figma, there are no visual prototypes to speak of, as a matter of fact. So how do we actually design for voice interfaces when all of the skills that we’ve gathered over the years, and all of the capabilities that we now have, focus solely on and are biased towards the practices and paradigms of the web? So to move from web to voice, here’s the question: how can you leverage your existing experience, your existing knowledge about the web, today, so that you can go ahead and begin working with voice tomorrow?

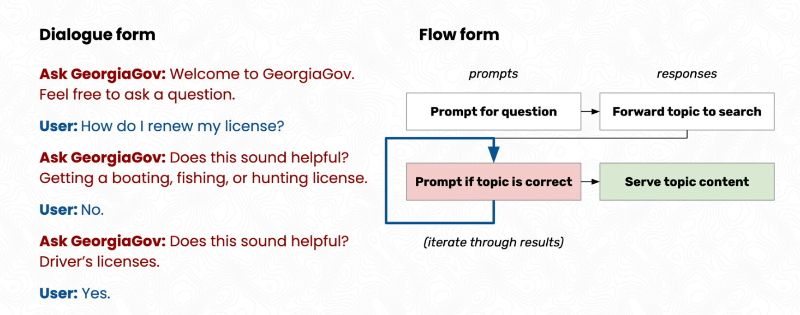

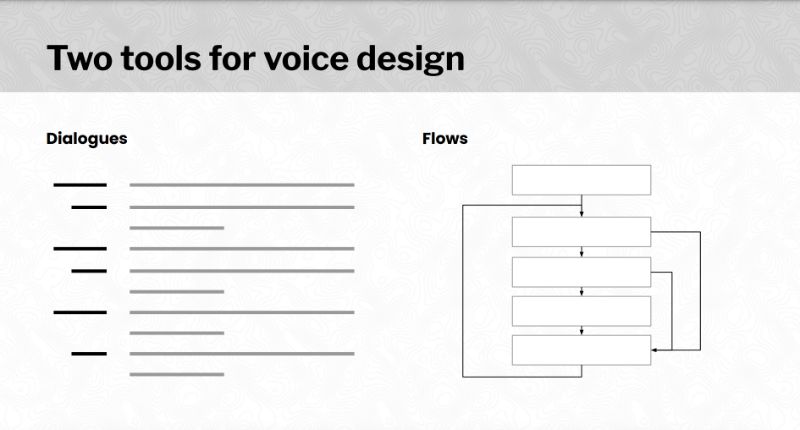

Well, here’s what we’re gonna cover today. The first thing I want to do is have a little bit of a conversation about conversation. I want to talk about what makes voice interfaces so different from the web, and why we should be thinking about verbal equivalents of our visual elements or visual events on the web, over some of the really challenging ways that we translate our web ideas over to voice interfaces. I want to talk about moving our design thinking from web to voice. What are the specific elements and the specific tools and tactics and strategies and practices and approaches that we need when we move from web design to voice interface design? Then I want to zoom in on a couple of very important aspects. If there’s one takeaway that I want you to leave this session with, it is this: when you create a voice interface, whether that’s based on your website, or based on some sort of a checkout flow, or based on some sort of delivery of content from your website, there are two things that matter most, two things that you’re going to have to keep in mind anytime you put together a voice interface for your own purposes. Those are, one, a set of dialogues, which represent the kinds of conversations that your voice interface can have, and two, voice interface flows, or call flow diagrams, which I’ll get to in a second, which really outline the structure and articulate the ways in which users will journey across your interface.

Finally, I want to talk about some of the things that are design issues in voice that really aren’t necessarily a big deal in a lot of the previous voice interfaces that we’ve worked with. One good example of a voice interface, by the way, is the venerated screen reader, which is another topic that’s being talked about here at An Event Apart, but I’ll explain a little bit about why it is that we’ve got some other design problems to think about in voice that haven’t really surfaced over the years when it comes to screen readers.

So let’s start with voice versus the web. What does it mean to be verbal over visual? What does it mean to loosen all of the ties that we have, and sever all of the different kinds of things that we understand that connect these various elements together, like links and calls to action and nav bars and sitemaps, and move away from those paradigms into a paradigm much more appropriate for voice? Just as a quick preface, I want to cite Erika Hall, who wrote the foreword for my newest book from A Book Apart, Voice Content and Usability: “Conversation is not a new interface. It’s the oldest interface. Conversation is how humans interact with one another, and have for millennia.” So what does that mean for us? Well, voice interfaces are unique because, unlike other interfaces, they employ our natural instincts over acquired skills. When we look at a website and a viewport, when we look at mice and keyboards and video game controllers and screen readers and refreshable braille displays, all of these things are artificial inventions. They’re skills that we acquire. To get that words per minute up to that level, we have to practice. To learn how a website is structured, and understand where a header and a footer and a navbar are, and what a sitemap or an RSS feed is, those are acquired skills that we develop over time. But voice interfaces are very unique because we don’t necessarily need to learn a whole lot to have a successful conversation with a voice interface. It’s the machines, the interfaces, that are playing on our own terms, rather than us playing on the machine’s terms. So voice interfaces are unique, and pure voice interfaces are even more unique.
These are, of course, voice interfaces that lack a screen altogether and have no physical or visual component. Now, we already have ready-made verbal representations of visual websites, and you might be wondering about that, since we’ve got two talks here at this conference: one that deals with voice interfaces like Alexa and Google Home and Siri, and another that deals with screen readers. But the thing is that screen readers are very, very different from voice interfaces. Ready-made verbal representations of visual websites are not quite the same thing as voice interfaces. So Chris Maury writes this from the beginning:

“I hated the way that screen readers work. Why are they designed the way they are? It makes no sense to present information visually and then, and only then, translate that into audio.”

He has a point. I think it’s very important for multimodal accessibility for everyone, every organization, and every website to make available a screen-reader-compliant, WCAG-compliant experience for users who prefer the screen reader over having to use other alternatives, which might not actually exist. But of course, the issue that screen readers present is that they’re fundamentally second-class citizens. They’re fundamentally not presenting web content or web experiences in a way that makes that aural and verbal experience first class. In a sense, they’re really abstractions of the visual representation of our pages, and we don’t necessarily want that. He writes in Wired magazine that this is not something that really works. And of course, I’m talking about designing for voice, not writing semantic HTML and using ARIA roles for screen readers. I’m talking about making a first-class aural interface that is completely rooted in the world of audio and sound and voice, not a second-rate hybrid interface that forces us as users to interact with both a visual equivalent in its lesser form and an aural equivalent. So designing for voice interfaces requires two things, and I want to talk today, over the course of the next 45 minutes that I have with you, about how you can write natural-sounding dialogue and how you can structure those dialogues into natural-sounding flows. But I want to do this not from the standpoint of somebody who’s starting out from scratch, because many of us who are here at An Event Apart don’t actually have the ability to just spin up a voice interface on our own and figure out how to make it work.
And believe it or not, I also had the same experience in my previous roles working with how to convert a lot of the websites and a lot of the web content that we’ve already built up over the years into a new realm where we can talk about how to make voice interfaces work from the standpoint of the websites that we already are rooted in, and where we’re already situated to begin with.

So how do we move our design thinking from web to voice? What are some of the things that we need to think about when it comes to turning our knowledge about websites, our knowledge about web elements and the things that make websites tick, to voice? We all know about these motifs on the web, these visual elements that make sense to all web users. We have web links, web pages, headers; we have calls to action, forms, navbars, sitemaps, RSS feeds, all of the things that, frankly, voice interface users cannot interact with.

I have two tips for you that you can take away, starting tomorrow. The first is, let’s reorient our design thinking away from these artificial visual elements that we are used to within the context of a website, over towards natural verbal elements that we use in daily conversation. The very first tool that we’re going to deploy and use is dialogue, and dialogue is, of course, the way that conversation works across every single human conversation. There are four key elements of dialogue that I identify in my book Voice Content and Usability as well. The first is onboarding, and that’s just that simple greeting, that initial introduction that we have to make as we start off a conversation with anybody. But every single conversation that we have with each other, or every single conversation that we engage in with a voice interface, has certain cadences and certain devices that are required in order for the voice interface to understand what we want. An example of this is a prompt. We ask somebody a question, and we want to have a response from somebody. We ask how somebody is doing; we ask if they want their change back in fives or in ones. And we also respond, by saying “yes” or “no,” or saying “I didn’t quite catch that.” And then of course there is the overarching purpose, the overarching motivation or rationale for the conversation, which is the intent, and identifying the intent, what the user wants, the destination that the user is trying to get at, is one of the most important elements of dialogue. Now, we’ve got tons of these motifs, these mainstays of the web that we’ve seen for a very, very long time. Pages that have a title in the browser’s top toolbar. We’ve got headers, we’ve got sections, we’ve got asides. We’ve got text formatting: we’ve got boldfacing, we’ve got emphasis, we’ve got underlining, which should really only be used, of course, for links. We’ve got links, calls to action, forms. We’ve got nav bars, menus.
We’ve also got alert boxes and error modals, and all of these are things that we’re used to using on the web. But in the voice interface setting, you really only have access to these four things, which we can consider to be utterances or elements within a dialogue: onboarding, prompts, intents, and responses.
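To make the four elements concrete, here is a minimal sketch in Python that tags each turn of a dialogue with one of them. The class names, the `speaker` field, and the sample wording are all assumptions made for illustration; none of this comes from the talk or from any voice platform’s API.

```python
from dataclasses import dataclass
from enum import Enum


class Element(Enum):
    """The four dialogue elements named in the talk."""
    ONBOARDING = "onboarding"
    PROMPT = "prompt"
    INTENT = "intent"
    RESPONSE = "response"


@dataclass
class Turn:
    speaker: str       # "interface" or "user" (hypothetical labels)
    element: Element
    text: str


# A made-up exchange; the wording is placeholder text, not real skill content.
sample_dialogue = [
    Turn("interface", Element.ONBOARDING, "Welcome to the state information service."),
    Turn("interface", Element.PROMPT, "What would you like to know about?"),
    Turn("user", Element.INTENT, "How do I register to vote?"),
    Turn("interface", Element.RESPONSE, "Here is what you should know about voting."),
]


def elements_used(dialogue):
    """Return the set of dialogue elements that appear in a dialogue."""
    return {turn.element for turn in dialogue}
```

Checking `elements_used(sample_dialogue)` against `set(Element)` is one quick way to see whether a draft dialogue exercises all four elements.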

All elements that we use in web design, in other words, have certain equivalents. They might not necessarily overlap very nicely, but they do have analogs in dialogue writing. And all events that we enable in web design also have equivalents in the structural component of voice interface design that lies right alongside dialogues, which is, of course, voice flows. So the second tip I have for you that you can take away tomorrow, if you want to take your website and turn it into a voice interface, or if you want to understand how to translate your web design thinking over to voice interface design, is: reorient your design thinking away from visual documents in space, toward aural events in time. As a matter of fact, on A List Apart, Erika Hall has this to say:

We need to have a shift from thinking about how information occupies space, namely documents, like the documents that we look at in screen readers or that we look at in browsers or microfilm archives, to how it occupies time, as events.

Of course, we do have a sense of events within the web. We know what it’s like to view something; we know what it’s like to click on something, or tap or swipe on something on a mobile device, or hover or scroll or fill in forms. But in voice, there’s really only one type of event, which is what the voice interface will do in response to the things that we say: the utterances that we issue, the dialogue elements that we have this interplay with when it comes to the voice interface. So voice events are really about decisions that the interface makes in response to what we are saying back to it. Now, if this seems a little bit strange, let’s go through a little bit of this in finer detail.

Our second tool, of course, apart from the first tool of dialogue, is flows, and “flows” comes from the term “call flow diagrams.” Think of the earliest phone hotlines, the ones you would call to reach an airline or a hotel conglomerate to reserve a flight or reserve a room. Generally speaking, if you’re calling a customer service hotline, you’re going to be moving through a call flow diagram that’s been baked into and programmed into that particular voice interface. So what does this call flow diagram actually entail? Well, there’s a series of elements that we should discuss when it comes to flow diagrams. Number one, of course, is these nodes and arrows that reflect the same kinds of moving between elements that we do on a regular basis. If we click on a link, if we respond to a modal, if we scroll to another section of the page to look at a different kind of content under a different header, that’s very similar to the ways in which we modulate our responses to the interface, and of course how the interface is going to respond, based on how we actually confirmed or responded to a certain prompt, to guide us to another decision state. It’s where the interface decides to take us. So these individual nodes, which are connected by arrows, are very similar to the kinds of user flows and flow journeys that our users take through mobile applications, through web applications, through websites. But they’re very different because they are much finer-grained: you can’t necessarily display a whole lot of information within a single decision state, which is a point at which the machine or the voice interface has to make a decision, has to make a choice about what to do next.
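One way to picture the nodes and arrows is as a small directed graph, where each node is a decision state and each arrow is labeled with the reply that triggers it. The sketch below is only an illustration under that assumption; the state names and reply labels are invented and do not describe how any real voice interface is implemented.

```python
# Each key is a decision state; each inner dict maps a user reply (an arrow
# label) to the next decision state. All names here are hypothetical.
FLOW = {
    "greeting": {"ask": "search"},
    "search": {"yes": "read_summary", "no": "search"},
    "read_summary": {"yes": "read_related", "no": "goodbye"},
    "read_related": {"yes": "read_answer", "no": "goodbye"},
    "read_answer": {},
    "goodbye": {},
}


def next_state(state, reply):
    """Follow one arrow; stay in the same state if the reply has no arrow."""
    return FLOW[state].get(reply, state)


def walk(start, replies):
    """Return the list of decision states visited for a series of replies."""
    path = [start]
    for reply in replies:
        path.append(next_state(path[-1], reply))
    return path
```

For example, `walk("greeting", ["ask", "no", "yes"])` moves from the greeting to a search, repeats the search after a “no,” and then reaches the summary state after a “yes.”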

Before we move forward though I want to just discuss a little bit about how you can apply this right now to your daily workflow and let’s talk through how you might write a dialogue for your own website. Let’s take for example the georgia.gov website. Now for those of you who don’t know georgia.gov is one of the most forward-looking websites when it comes to not only accessibility, but also when it comes to structured content, when it comes to content delivery, to a whole variety of different applications and experiences in a way that’s really really conducive to creating a voice interface that’s based on a website, and of course that’s why we’re here today is to learn about how we can take our existing knowledge about website design and website structure into the context of a voice interface.

So for Georgia, what we did is we took all of the FAQ content that’s available through that Popular Topics link that you see at the top of the page, and made that available through Amazon Alexa. The georgia.gov site has certain sections that it deals with: every single Popular Topics page covers a certain function or a certain category of issues that Georgian residents have to deal with on a daily basis, things like business licenses or name changes or saving for college or voting. The very first thing that we have to do as voice interface designers, if we want to take this information and translate it into a voice interface from the context of a website, is to convert this kind of hierarchy, this kind of structure, into a structure that makes sense for a voice interface, and of course, that begins with the process of writing a dialogue.

Further down the page here, if I click on, for example, the voting page, which you see at the very, very front here, there’s a topic title, which is of course the main idea of what this page is going to cover. We’ve also got a “What You Should Know” section that includes an introductory paragraph and a few bullet items about what you should know about this particular topic. So let’s start writing a dialogue. And one thing I missed here is that every single FAQ is a question-and-answer coupling, which really involves even further deepening of the topic, to the point that we understand much more about the topic than we would have otherwise. So every single one of these pages, the voting page, for example, contains not only a “What You Should Know” section that covers the most important information along with that introductory paragraph, but also a series of frequently asked questions that are deeper in the hierarchy of how the page is structured.

Now, if we’re looking at this from the standpoint of a web browser, we just simply scroll down the page. We look at the initial information, we get that context settled, we scroll down further, we look at some of the other information, and we have a lot of information now that we have access to. But we can’t present an entire page when it comes to a voice interface. And if you’re building a checkout flow, for example, or some other transactional kind of interaction with your users, it’s also very difficult to actually display everything on the page like you would in a wizard, or in a single-page application that displays all of the steps in your checkout process. So we have to split this up into different elements of dialogue, and we have to understand some of the things that we have to do to make this work within the context of a voice interface. What I’m gonna do here is show just how we wrote some of our initial dialogues, before digging in and looking at which elements on the web we should be paying attention to when it comes to looking at dialogues and voice interfaces from the context of web design.
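As a rough sketch of that splitting step, the Python below models a topic page with the parts described above (a title, an introductory paragraph, a few bullet items, and FAQ pairs) and breaks it into short chunks that could be spoken one at a time. The `TopicPage` type, its field names, and the placeholder content are assumptions for illustration, not the actual georgia.gov content model.

```python
from dataclasses import dataclass, field


@dataclass
class TopicPage:
    title: str
    intro: str
    bullets: list = field(default_factory=list)
    faqs: dict = field(default_factory=dict)   # question -> answer


def to_utterances(page):
    """Split one page into short chunks a voice interface can speak in turn."""
    chunks = [f"{page.title}. {page.intro}"]
    chunks.extend(page.bullets)
    if page.faqs:
        # Offer the deeper FAQ material instead of reading it all out.
        chunks.append("I have a list of related information. Would you like to hear it?")
    return chunks


# Placeholder content only; this is not real page text.
page = TopicPage(
    title="Example topic",
    intro="A one-sentence introduction to the topic.",
    bullets=["First thing to know.", "Second thing to know."],
    faqs={"An example question?": "An example answer."},
)
utterances = to_utterances(page)
```

The point of the sketch is the shape of the output: several short utterances ending in a prompt, rather than one page-length block of speech.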

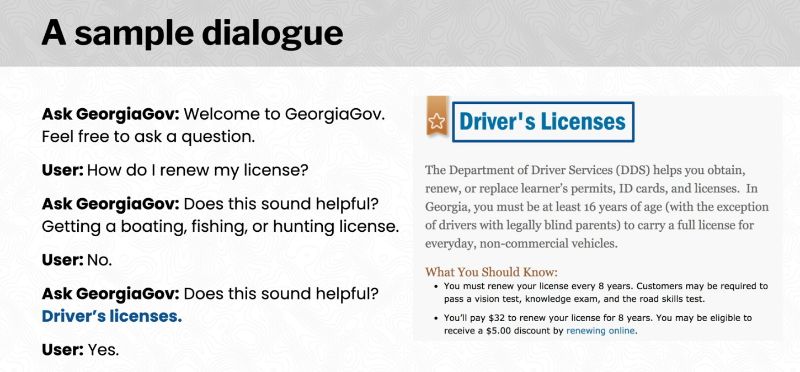

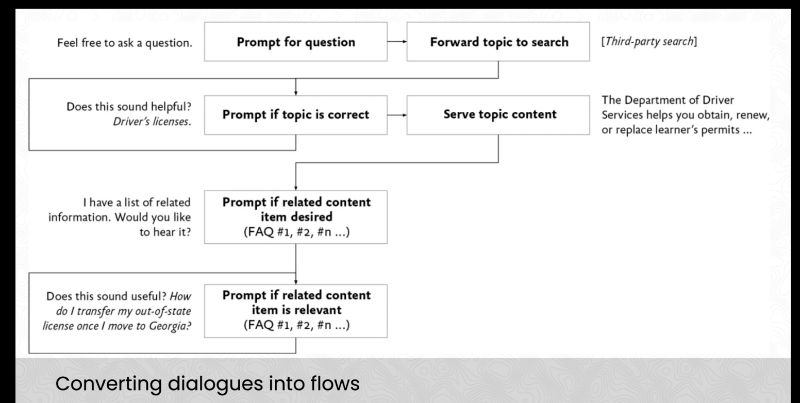

Here we see a sample dialogue that focuses on how to choose a topic. Now, all of this text, for example, is in the voice interface, but it’s not actually represented within the website. It’s all meant to help the user understand what the purpose is, and to get the user to the point where they can actually interact with the interface. As you can see here, the first section of this dialogue allows the user to respond to search results that the Ask GeorgiaGov interface has provided: “Does this sound helpful? Driver’s licenses.” And now we’ve identified the page to which we should be going next. The next part of the dialogue delivers information from that page, namely the “What You Should Know” section, and then concludes with, “I have a list of related information. Would you like to hear it?” The user says “yes,” affirmatively. “Does this sound useful? How do I transfer my out-of-state driver’s license once I move to Georgia?” Of course, that’s further down the page. If we go down the page, we can see that that is one of the questions that has been asked of us as a state government entity: how do people transfer their out-of-state driver’s licenses into Georgia? Now, of course, we have to transmit this information after the user has said “yes.” And now we have a further dialogue, a further utterance, that allows this voice interface to share exactly the information that the user wants.
So this is how you go about writing a sample dialogue, and I encourage all of you, tomorrow even, if you have some time, to consider what dialogues you can write based on the content that you provide in your website or based on the transactions that you enable in your website. If you have an order form, a checkout process, an e-commerce site, or if you have a content site that includes an about page, how about you write a dialogue that allows users to traverse the information hierarchy that is represented on your website, in a way that makes sense for a voice interface? Let me give you some help with this, and let’s talk through some of the ideas that we can work through. But first, a couple of things to remember before we move into flows and move into some of this more complicated material.

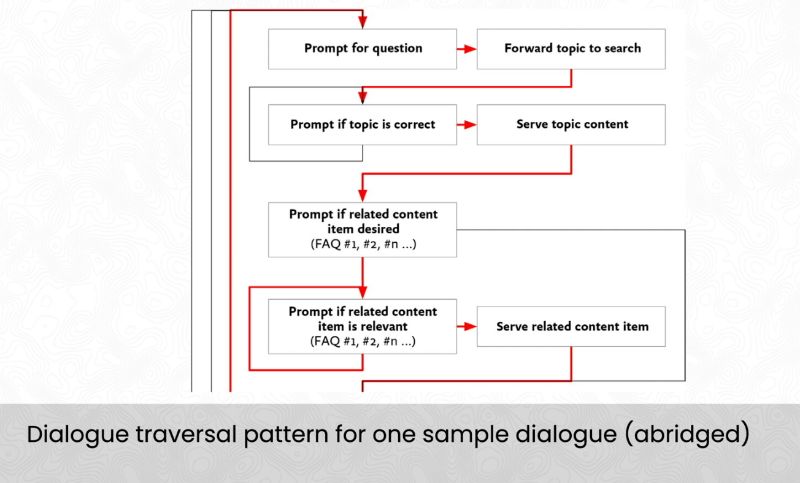

A single sample dialogue represents a single flow traversal pattern, which means that a single dialogue is really one way of going through that flow diagram that I mentioned earlier, and multiple sample dialogues, just like the one that we wrote, make up a single call flow diagram. For example, let’s just say that we wrote a call flow diagram.

I’m showing you this right now because I want to help familiarize us with how this looks as new voice interface designers. Those of us who are web designers have probably never seen anything like this before. It’s very unusual. It might also look like one of those flow journeys that we see in mobile applications and so on and so forth, but for voice interfaces, it’s very different. As you can see here, what we just did with this dialogue that we wrote is traverse one of these routes through this labyrinthine call flow diagram that we’ve put together.

Now, I’m going to walk through how you do all those steps in detail, while also mapping back and connecting the dots to all of the things that we know and love as web designers on the web. So the key takeaway here is that there are two tools for voice design: there’s dialogues and there’s flows. And as Randy Harris writes in Voice Interaction Design, you can’t really build one in isolation from the other. You can certainly start out with sample dialogues, and that’s where I always recommend everyone starts out, but eventually you’re going to have to start designing flow diagrams that represent those dialogues, and tweak those interchangeably as you go through.
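The relationship between sample dialogues and a call flow diagram can be sketched as follows: if each sample dialogue is written down as the sequence of decision states it passes through, then merging several of them yields the arrows of one diagram. This is an illustrative simplification with invented state names, not a description of any particular design tool.

```python
def build_flow(sample_paths):
    """Merge several sample dialogues (paths of states) into one flow graph.

    Returns a dict mapping each state to the set of states it can lead to.
    """
    flow = {}
    for path in sample_paths:
        for here, there in zip(path, path[1:]):
            flow.setdefault(here, set()).add(there)
        if path:
            # Make sure the final state of the dialogue appears as a node too.
            flow.setdefault(path[-1], set())
    return flow


# Two hypothetical sample dialogues, each one route through the diagram.
paths = [
    ["greeting", "search", "summary", "related", "answer"],
    ["greeting", "search", "search", "summary", "goodbye"],
]
flow = build_flow(paths)
```

Each additional sample dialogue adds arrows to the same graph, which is why writing dialogues first and drawing the diagram second tends to work well together.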

So designing for voice interfaces, once again, means writing natural dialogue and structuring natural flows. But what does it mean to write an effective dialogue? What does it mean for us to translate our website structures and our website elements into voice interface elements that make sense within a dialogue? So once again, let’s review the four elements of dialogue that I cite in my book Voice Content and Usability. There’s onboarding, the orientation of the user, which is accomplished in various ways on our website. There’s prompts, the various ways that we elicit user responses, whether it’s to click on a link or fill in a form or do something else that requires us to prompt the user, so that we can respond to what happens. Then of course there’s the intent that the user has, which we have to understand: we have to identify what it is that the user wants. This normally takes place through the user’s actions, of course, on a website. It’s much more complicated within a voice interface, but it’s just as crucial.

And finally of course we want to be able to deliver information and feedback if there’s an error for example we want to show a modal, we want to show an alert box that says hey you made a mistake, or I don’t understand what you’re trying to do here. Tell me, once again, how I can help you, and what we can do to fix this. So responses are very important as well because not only are they of course the most important delivery mechanism for any type of content, they’re also very important for helping users understand how to perform transactions, and whether those were successful or failures.

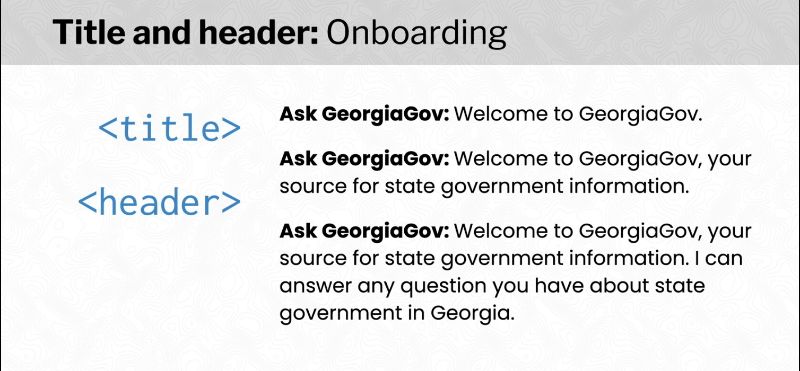

So let’s start with onboarding. Well, the typical onboarding that we normally do within a website is using the title within the HTML that we write, or a header element, for example. It could also be some sort of a div that contains an intro paragraph, or a set of information within a section element that really focuses on what’s most important about this page. There are several ways to do onboarding. You can take a look right now at some of your own titles and headers within your website and connect the dots over to how you might write a similar title or header or onboarding for your voice interface dialogue. For example, you probably want to keep your voice interface onboarding relatively short, because your title and header are also relatively short. Saying something like, “Welcome to GeorgiaGov, your source for state government information,” is a perfectly fine explanation. If you go a little bit further than that, the longer you go, the more the user’s eyes will glaze over. Keep in mind, as Erika Hall mentioned earlier, that we’re not thinking about documents in space. We can’t just do a flick of the mouse wheel and scroll past it. We’re kept captive by the time spent actually reading through a lot of the information that we’re meant to present.

So your onboarding should be shorter rather than longer, and I recommend sticking to something like the second or third onboarding you see here, where you’re very, very clear about what you can provide, which is state government information in the case of georgia.gov, but also, most importantly, what you can do for the user. Stating your purpose is very important. Now, we use, for example, the very first one here, because people who are coming to Alexa usually have a sense of what skill or what application they’ve just installed from the Alexa marketplace.

What about text formatting? Well, this is very important to dialogue, right? I think one of the things that’s very, very crucial when you write website text is being able to effectively use these text formatting and style elements like strong, em, small, even (perish the thought) inline style elements. But here’s the thing: just as web designers and content designers use text formatting and style and font changes and larger text to inflect and modulate how the text reads and is visualized on the page, voice interface designers inflect the responses that they issue with conversational markers, and with other cues in the form of sound gestures like klaxons or alarms or whistles, to inflect and modulate how text sounds to the user. Keep in mind that in a pure voice interface, there is no visual output, there is no visual screen, and there is no physical tactility that you can leverage, which means that a lot of the information, the emotional context, the narrative context that we would otherwise provide through our visual formatting has to be presented through, of course, the dialogue itself.

Now, for most voice interfaces, you really want to stick to a couple of best practices for dialogue writing. You want to keep it short: you want to avoid lengthy sentences, as I said earlier with onboarding, unfamiliar terminology, and too many options, which we’ll talk about in just a little bit. You also want to make sure that dialogue sounds as natural to the human ear as possible, and that means that some of the things that you’ve written on your website might not necessarily be appropriate for a voice interface quite yet, because you’ve written them for an audience that’s going to be reading, not for an audience that’s going to be listening to this text. And designing for ambiguity is very important too. Voice interfaces can’t just display a list of options, and I’ll talk about this a little bit later as well. The more ambiguities that are present in a dialogue, the more difficult it is to conduct disambiguation in a natural way.

You also want to support corrections, and this is an area that we’ll talk about with error handling in just a little bit. Even if it’s easy for the user to start over from the very beginning, you want to make sure that users can very easily issue a correction and fix a mistake that they’ve made.

Also, timing is important. Time is one of the key means by which voice interfaces can approximate physical space. Like negative space, silence is very effective, but pauses longer than 400 milliseconds sound very unnatural in human conversation. But there are certain things that we can do to inflect the narrative of how we work with voice interfaces, and that is, of course, conversational markers. Conversational markers are linguistic elements that bind together chunks of conversation into a cohesive narrative, and they mimic the styles and formatting that we apply to our text, allowing the user to understand, “oh, this is where we’re moving into a different part of the dialogue,” or “this is where we’re moving into a very different part of our voice interface.” There are temporal markers like “first” and “last,” “previous” and “next,” “almost there,” and “finally.” There are acknowledgments like “thanks,” “got it,” “understood,” or “sorry, I didn’t catch that.” And of course there’s affirmative feedback that helps the user understand that they’re using something that isn’t really just a website that’s reading out information. It’s really about providing those narrative forks in the road, just as our visual layout elements do on a website, that allow the user to understand that they’re moving into a different narrative state, just like the ways in which we format and style our text to reflect how these narratives change as well.

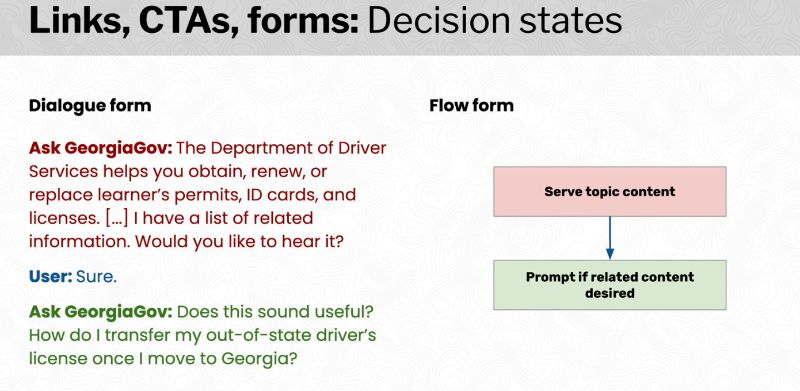

So let’s talk through some really deep things like links, calls to action, forms. These are prompts in voice interfaces. If you click on a link, if you click on a button, if you fill out a form, if you fill out a text area, if you fill out a text box, these are all ways of providing a response. And these are really the ways in which a voice interface is able to gather and understand what it is that you want as a user. So prompts are another key dialogue element here, because we’re actually asking you to provide some input, just like the link encourages you to click on it, just like the button encourages you to click on it, just like forms encourage you to fill them out and submit them. So, one way to do this is what’s called a non-directive prompt, like “Welcome to GeorgiaGov, feel free to ask a question,” with no example. But a directive prompt, which gives you a very clear example of what you might be able to respond with, is a very good way for a voice interface user to feel like they have some skin in the game and understand exactly what it is that the voice interface wants. For example: “Welcome to GeorgiaGov, feel free to ask a question like ‘who is the governor of Georgia’ or ‘how do I register to vote.’” But there’s another kind of dialogue element that’s very important within the realm of how we deal with links and calls to action and forms, and that is, of course, intents.
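The difference between the two kinds of prompt is easy to show in a few lines of Python. The function below is a hypothetical helper, written only to illustrate the distinction: with no examples it produces a non-directive prompt, and with examples it produces a directive one.

```python
def build_prompt(greeting, examples=None):
    """Return a non-directive prompt, or a directive one if examples are given."""
    base = f"{greeting} Feel free to ask a question"
    if not examples:
        # Non-directive: the user gets no hint about what to say.
        return base + "."
    # Directive: show the user the kind of utterance the interface expects.
    quoted = [f"'{example}'" for example in examples]
    return f"{base} like {' or '.join(quoted)}."


non_directive = build_prompt("Welcome.")
directive = build_prompt(
    "Welcome.",
    ["who is the governor of Georgia", "how do I register to vote"],
)
```

Keeping the examples to two or three fits the earlier advice about avoiding too many options in a single utterance.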

Now, the only way for a voice interface to understand the sort of goal that a user has is through the user’s utterances, and their own responses to prompt, but of course on a website, we understand the user’s intent based on links and buttons and we have all sorts of analytics that help us understand, oh well they submitted that form they had an error on this form, we understand exactly what the intention of the user often is by interpreting what the user wants to do from those visual and physical interactions that they have completed.

In the voice interface, however, we only have the user’s utterances, which we can interpret through the speech understanding and the natural language understanding that’s built into our voice interface, to really have a sense of what it is that the user wants to do to accomplish their goal. For example, if the user asks, “How do I renew my license?”, well, askgeorgia.gov, the voice interface, has to understand that utterance and then conduct a search based on that. In our case, that allows it to provide a confirmation prompt, like “Does this sound helpful? Getting a boating, fishing or hunting license.” Well, that’s not correct, because I want to renew my driver’s license. The user says “No.” “Does this sound helpful? Driver’s licenses.” So there’s a key process here that is really, really tough for a lot of people coming from web design to understand, and this is where I want to stop for a moment and talk about intent identification. There’s a much longer explanation of this in my book, but intent identification is the process of understanding what the user wants when it’s ambiguous or uncertain.

Let’s go back to that last example. As you can see here, our question was actually a little bit ambiguous; the way that we expressed our intent was a bit ambiguous. We asked about a license, but we didn’t necessarily say driver’s license, and of course the voice interface, in all of its wisdom, decided to interpret that incorrectly and to ask us if we wanted something else, like getting a boating, fishing or hunting license. That’s totally out of the domain of what we’re looking for. By doing this kind of confirmation and using these prompts, we can allow a voice interface to understand exactly what the user wants to do, in the exact same way that we can guide a user down a form to understand, through help text for example, what it is that they should be filling in, or which links on the page represent the items that they’re looking for specifically.
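The confirmation exchange above can be sketched as a short loop. This is a rough illustration under stated assumptions, not how the real interface is implemented: `identify_intent`, the `ask` callback, and the set of accepted "yes" answers are all hypothetical, and the search results are hard-coded to the two topics mentioned in the example.

```python
def identify_intent(candidates, ask):
    """Offer each candidate topic in turn and return the first one confirmed.

    `ask` is any callable that speaks a prompt and returns the user's reply
    (hypothetical; a real interface would go through speech recognition).
    """
    for topic in candidates:
        answer = ask(f"Does this sound helpful? {topic}")
        if answer.strip().lower() in {"yes", "yeah", "yep"}:
            return topic
    return None  # no candidate matched what the user wanted


# Search results for the ambiguous utterance "How do I renew my license?"
search_results = [
    "Getting a boating, fishing or hunting license",
    "Driver's licenses",
]

# A scripted user who rejects the first topic and accepts the second.
scripted_answers = iter(["No", "Yes"])
prompts_spoken = []


def scripted_user(prompt):
    prompts_spoken.append(prompt)
    return next(scripted_answers)


chosen = identify_intent(search_results, scripted_user)
print(chosen)
```

Each "No" simply moves the interface on to the next candidate, which is the winnowing-down behavior that resolves the ambiguous request.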

What about modals? Well, this is a very, very common thing that we find in web design. We have alerts, we have modals that we might be using jQuery or React JS for, and these are usually things that display things like errors. For example, if I ask my askgeorgia.gov voice interface something with some unclear mush of speech that makes absolutely no sense, then askgeorgia.gov is really going to have to figure out a way to respond that mimics the ways that we respond to users who have done something that’s not quite what we expected on a website, and that means responding with a clear error message like “Sorry, I didn’t understand. Ask me a question related to the state government of Georgia.” As you can see here, this is an example of how we can connect the idea of a modal, the idea of an error message or an alert, over to the voice interface setting, where we actually have a means of presenting the same sorts of visual elements that we see on a website in an oral form. In this case, we’re using a combination of prompts and responses to really make sure the user understands that we’ve come across an error. The response here, of course, is “Sorry, I didn’t understand,” and the prompt helps the user understand: well, maybe you asked a question that was not within my realm of expertise, or maybe you said a statement, for example. We’ll just restate the prompt, which is “Ask me a question related to the state government of Georgia.” Now, as you can see here, this is a very interesting element within voice interfaces, because a lot of the things that we work with in voice interfaces involve errors. Voice interfaces by and large are very, very, very error-prone, which means that we face a lot more errors, a lot more issues, a lot more of these sorts of alert modals or error modals than we do on a typical website.

What about flows? So now that we’ve talked about some of these elements like links and calls to action and modals and titles and header tags, let’s move into some of the events that occur within a website. Let’s talk about what makes up a call flow diagram. As I mentioned earlier, generally speaking, when you interact with a phone hotline you’re interacting with a call flow diagram. You’re interacting with a series of flows and lines and arrows (here, we call them nodes and arrows) that allow you to understand: oh, now I want to make a reservation. Now I want to book a flight. Oh wait, now I want to choose my seats on that flight. Now I want to choose my meal on that flight. All of those represent decision states where the interface presents to you a prompt, for example, “What kind of meal would you like? Do you have any dietary restrictions for that meal?” and so on and so forth. Just like if we were to choose those meals or reserve a flight or reserve a hotel room on a website, we would be clicking a link, responding to a modal, scrolling down the page to another section of the page.

So as you can see, making decisions and moving between these elements, these events that occur on websites, are very similar to the kinds of things that we need to do, the kinds of motions that we need to make between decision states within voice interfaces. For example, when we click on a link and we go from one page to another, page A to page B, that’s very similar to moving between decision states. Clicking on that link or following that call to action or scrolling down and focusing on another element in that form is an example of moving between those decision states, and you can see on the right side here that we can consider these decision states, these nodes, to be, well, webpages, in the case of links or calls to action, or they can be fields on a form that we’re trying to fill out.

Here’s an example. One of these decision states that we saw earlier was where the askgeorgia.gov voice interface needed to prompt whether they wanted to have related content. That’s the FAQs that fall underneath, right, not the “What you should know” section but those FAQs that fall deeper and deeper into the rabbit hole. Here we’ve got askgeorgia.gov asking the question, “I’ve listed related information, would you like to hear it?” We’re moving from one decision state, where the machine, the interface, has just served some topic content, namely the driver’s license content. The user responds with a confirmation, “Yes,” and now, based on that response, the interface will move over into another decision state, which is of course to prompt if there’s any related content that’s desired by the user.

Decision states represent actions that are performed by the voice interface, including those that are invisible to the user. Here’s an example of this. One of the conventions I use, and this is something that’s not necessarily common across every single voice interface designer’s toolkit, but it is quite common in a lot of voice design, is to put prompts on the left and responses on the right so you have a very clear delineation between your decision states and how they operate. Well, in this case, what we’re seeing here is a prompt for a question, which is “Welcome to Georgia gov, feel free to ask a question.” The user responds by saying “How do I renew my license?” and what the machine does, what the voice interface does here, is to go ahead and forward that topic to a search tool, which is on the georgia.gov website, and this is invisible to the user. This is not something that the user sees. And chances are, if you’re building a voice interface for your own website that has a search tool that you want to use within your voice interface, you’re going to have to create an invisible decision state, or an invisible action that the voice interface has to perform, in order for you to move on to the next step, which is, of course, prompting if the topic is correct: “Does this sound helpful? Getting a boating, fishing or hunting license.”
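One way to picture a call flow diagram with both visible and invisible decision states is as a small table of nodes and arrows. The sketch below is an assumption-laden illustration: the state names, the `visible` flag, the outcome labels, and the `walk` helper are all hypothetical and chosen only to mirror the steps described above.

```python
# Each decision state (node) lists its arrows: outcome -> next state.
# "visible" marks whether the user hears anything at that state.
# All names here are hypothetical, for illustration only.
FLOW = {
    "prompt_for_question": {
        "visible": True,
        "arrows": {"question": "forward_to_search"},
    },
    "forward_to_search": {
        "visible": False,  # invisible action: query the website's search tool
        "arrows": {"results": "prompt_if_topic_correct"},
    },
    "prompt_if_topic_correct": {
        "visible": True,
        "arrows": {"yes": "serve_topic_content", "no": "prompt_if_topic_correct"},
    },
    "serve_topic_content": {
        "visible": True,
        "arrows": {"done": "prompt_for_related_content"},
    },
    "prompt_for_related_content": {
        "visible": True,
        "arrows": {},
    },
}


def walk(flow, start, outcomes):
    """Follow one arrow per outcome and return the decision states visited."""
    path = [start]
    current = start
    for outcome in outcomes:
        current = flow[current]["arrows"][outcome]
        path.append(current)
    return path


path = walk(FLOW, "prompt_for_question", ["question", "results", "no", "yes", "done"])
heard_by_user = [name for name in path if FLOW[name]["visible"]]

print(path)
print(heard_by_user)
```

The `forward_to_search` state appears in the path the interface takes but not in the list of states the user hears, which is the sense in which it is an invisible decision state.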

There are also recursive decision states. Let’s say, for example, that you forgot to fill out a required field in a form, and you hit that submit button, but then an error pops up and says, “Well, sorry, you need to fill this out in order to move forward. Here, feel free to fill it in.” When you dismiss that, or you actually resolve that error, you can move on to the next, let’s say, step in the form or the next page in your wizard, and that’s very much like what I call recursive decision states in a voice interface. If you come across an error that’s really unfamiliar to the voice interface, where you’re not really giving it a response that it can work with, it’s going to keep on looping over and over again until you give it something it can work with, or you exit out altogether and go back to the very beginning.

So modals in this sense are very similar to recursive decision states where, for example, the very first thing that askgeorgia.gov does is to say “Welcome to Georgia gov, feel free to ask a question.” I respond with some garbled, muddled mumbling, and askgeorgia.gov says “Sorry, I didn’t understand. Ask me a question related to the state government of Georgia.” That’s the same prompt all over again. And what we’ve done here with this arrow is to move back once again to the same exact decision state that we were on, just going right over it again and looping through the same prompt over and over again, and if I were to keep on responding in a way that is not understood by the voice interface, that would continue to occur. And now finally, of course, I ask it in a way that the voice interface understands, “How do I renew my driver’s license?” Well, askgeorgia.gov responds, “Does this sound helpful? Driver’s licenses.” And that’s where, of course, we’ve moved on to forwarding that topic over to the search tool.
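The looping behavior described above can be sketched as a simple retry loop. Everything here is an illustrative assumption: `understands` is a toy stand-in for real natural language understanding, and `ask_until_understood`, `listen`, `say`, and the `max_attempts` limit are hypothetical names rather than anything from the actual interface.

```python
ONBOARDING = "Welcome to Georgia gov, feel free to ask a question."
ERROR_REPROMPT = (
    "Sorry, I didn't understand. "
    "Ask me a question related to the state government of Georgia."
)


def understands(utterance):
    """Toy stand-in for natural language understanding (an assumption):
    treat anything that ends in a question mark as understood."""
    return utterance.strip().endswith("?")


def ask_until_understood(listen, say, max_attempts=3):
    """Stay in the same decision state until an utterance is understood.

    Returns the understood utterance, or None when the attempts run out
    (the "exit out altogether" case).
    """
    say(ONBOARDING)
    for attempt in range(max_attempts):
        utterance = listen()
        if understands(utterance):
            return utterance
        if attempt < max_attempts - 1:
            say(ERROR_REPROMPT)  # arrow pointing back at the same state
    return None


# A scripted user who mumbles twice before asking a clear question.
heard = iter(["mmrgh blrf", "uhh license thing", "How do I renew my driver's license?"])
spoken = []
result = ask_until_understood(lambda: next(heard), spoken.append)

print(spoken)
print(result)
```

Capping the number of attempts is a design choice in this sketch; without some exit, a user who is never understood would be stuck in the loop indefinitely.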

One of the questions I often get as a voice interface designer who used to be a web designer as well is, what do we do with menus? Because menus are so common, and lists are so common, and series and sequences are so common within a website. Voice menus are essential for identifying user intent, for performing that process of intent identification, but the problem is that you just can’t present as many verbal options as visual menus can. Some of the tactics that people use are, for example, to do the single-access key approach, where you just present two options, and that’s why they’re also called dichotomous keys, or to present a limited menu of options that are very easy for the user to understand. For example, this is why, on a phone hotline, which uses a call flow diagram, you’re generally going to say something like “reservations” or “cancellation,” or something like that, as opposed to a very, very long utterance or a very, very long response to this sort of prompt. So voice menus are very crucial, but they really are much more limited in the realm of voice interfaces. But they also involve recursive decision states many times, because if you’re doing a very complicated list, a very complicated menu, for example where you’ve got potentially 10 or 15 search results that have been returned, like “Getting a boating, fishing or hunting license,” like “Driver’s licenses,” like C, like D, like E, you really have to once again iterate through those results and prompt the user, over and over again, to understand what exactly they want. So not only are these recursive decision states really useful for error handling, just like our modals are on our visual websites, they’re also very helpful for identifying the intent of the user by iterating through some of these lists and menus that we have to go through.
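As a rough sketch of the "limited menu" tactic, a long list of options can be split into short spoken prompts of two options each. The helper `menu_prompts`, the "You can say" wording, and the extra options beyond reservations and cancellations are all hypothetical, included only for illustration.

```python
def menu_prompts(options, per_prompt=2):
    """Split a long menu into short spoken prompts with a few options each.

    Hypothetical helper; two options per prompt mirrors the
    dichotomous-key tactic mentioned above.
    """
    if per_prompt < 1:
        raise ValueError("per_prompt must be at least 1")
    prompts = []
    for start in range(0, len(options), per_prompt):
        chunk = options[start:start + per_prompt]
        prompts.append("You can say " + " or ".join(chunk) + ".")
    return prompts


# "baggage" and "seat selection" are made-up options for this example.
hotline_options = ["reservations", "cancellations", "baggage", "seat selection"]
prompts = menu_prompts(hotline_options)

for prompt in prompts:
    print(prompt)
```

The interface would speak one prompt at a time and only move to the next if the user does not pick from the current one, which is where the recursive behavior comes in.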

Here’s an example of how these two very, very different ideas within web design dovetail into a single structure within the voice interface design paradigm. So one of the things that I will do here is to share a little bit about what we did to convert these dialogues into flows. As I mentioned earlier, you want multiple sample dialogues that represent all the conceivable conversations, or most of the conceivable conversations, because there’s absolutely no way to look at every single one of the dozens of possibilities or account for all the hundreds of permutations of conversations you can have with a voice interface. But by and large, you want to write enough sample dialogues, enough conversations that represent a typical full interaction flow, to allow you to create a flow diagram based on them.

What we’ve done here is we’ve started to create a rudimentary flow diagram based on some of the dialogue elements that we’ve got. You’ve seen the onboarding up there with the prompt, you see other prompts and responses that are sprinkled throughout, and of course you see recursive behaviors that are drawn in here as well. As for the final decision flow diagram, of course I’m not going to go into the full detail of this, because it is very, very long and there are a lot of dialogues involved in the creation of this interface. But what we just did was to go through a lot of the dialogue elements that we discussed earlier and look at how those can be mirrored within a voice interface.

For example, an initial prompt, that onboarding, that title element, that header element, is really about introducing yourself to the user. Then you’re going to prompt them for a question and forward that over to a search. You’ll get a prompt asking if that topic is correct, which is of course a confirmation modal, or some sort of message on a form, or some other mechanism that allows the user to respond, such as, of course, clicking on a link from a list of options in a menu. Then of course we’re going to go down the rabbit hole further and further, and as you can see here, our call flow diagram represents, to a great extent, the same exact visual hierarchy that we see on a website.

Going back to what Chris Murray said earlier about screen readers: one of the big problems with screen readers is that the visual hierarchy is already settled. Of course there are many ways to use screen readers efficiently, but screen readers are really stuck and trapped in what it is that the website visually provides in terms of how it lays out the page. With voice interfaces you can be a lot more flexible. You can ignore certain areas of the page. You can ignore certain links or calls to action. You can even ignore the navbar altogether, because of the fact that menus are much harder to implement. So in a call flow diagram, you really want to focus on the events that occur: what are the links that you click, how do you fill out a form, how do you focus on another form element, how do you move using these arrows across decision states? And of course, with dialogues you want to focus on those elements: what are the equivalents to your prompts and responses, what are those links and calls to action and modals that you’re going to find within your dialogue as well?

I want to end here with a really quick set of ideas about what some of the new design problems are in voice, because unlike web design, voice interface design encounters a series of very, very important issues. One of them, of course, is that there are subtle problems on the web that become much more obvious issues in voice, namely perceived identity and implicit biases about people, because, for better or worse, we actually personify and think of, and envision, and look at voice interfaces as real people. When you hear Alexa or Siri or Cortana speak, who is the person you picture, or hear speaking in your mind? Generally speaking, what we see or what we hear in our heads is a cisgender, heterosexual white woman with a General American dialect, but that doesn’t reflect the richness of human language that we have today. When was the last time, for example, that you interacted with somebody who spoke in AAVE, African American Vernacular English, or who code-switched between Spanish and English because of their bilingualism, or who code-switched between queer and straight-passing modes of speech? Voice interfaces really need to represent the richness of human language, and that’s one area that still remains for us to tackle. Websites, we don’t really necessarily personify them. We don’t see them as human beings, unless of course you count some of the strange examples out there, like Ask Jeeves. We don’t really look at websites as being human beings in their own right, but of course, voice interfaces do represent human beings and do represent these natural-sounding voices that are very, very different from the kinds of voices and the fast clip at which screen readers speak, or the mechanical ways in which we interact with screen readers. One of the worries that I have, of course, is about one of the main points of the conversational singularity that will be occurring here in the future, according to futurists like Mark Curtis.
The conversational singularity will make voice interfaces indistinguishable, indiscernible from natural human conversation. But I think, as we’ve seen today, websites can, generally speaking, fall into a really easy set of paradigms that look at the ideal way or the optimal way in which a user might interact with a website, of course ignoring all of the systems of oppression that users encounter when it comes to ableism or when it comes to accessibility or when it comes to, let’s say, the language that’s actually written into a website. But the conversational singularity claims that voice interfaces will eventually become indistinguishable from human conversation.

I think the question that we have to ask ourselves is, at what cost? And that’s a conversational singularity for whom? If you think about, for example, the ways in which we interact with our websites, they’re very different. On a voice interface, the ways in which we respond to questions, the ways in which we interpret those questions, are very much more along the lines of how we interact with real people on a daily basis, not how we might click or hover or scroll or type on a website. Those are artificial interactions. What we’re doing with conversation and voice is a natural, organic, primordial, primeval, spontaneous interaction that gets at the very, very root of what it means to be human.

Some key takeaways here. Once again, voice interfaces are unique because they employ our natural instincts over acquired skills. They’re not like keyboards, they’re not like smartwatches, they’re not like smartphones, they’re not like video game controllers. They’re really about speech, and they’re really about how we have a natural-ish conversation, or as natural a conversation as we can have with each other as human beings, and applying those same mechanisms and those same natural phenomena to voice interfaces themselves. And voice requires oral analogs to these visual and tactile elements and events. We’re not talking about links anymore, we’re not talking about calls to action or form fields; we’re talking about prompts and intents and responses. We’re not just talking about clicking or scrolling; we’re talking about moves and arrows and branching and recursive behaviors between various decision states within a call flow diagram. It’s a very, very different way to think about voice interface design in the context of web design because, in web design, we have a visual canvas. We have a blank page, we can put anything we want on that page, draw some, you know, layout elements, we can draw some text, we can have a really nice mockup in Figma or in XD. But with voice interface design, we’re really stuck with these design artifacts that feel very rudimentary, that feel very limited: these dialogues that really are the full soul of the narrative makeup of our voice interface, and the call flow diagrams, which are really just journeys that our users traverse within those voice interfaces.

Here’s some questions to ask your team or your organization or yourself if you want to create a voice interface for your website.

- How would your website sound, not look, and verbally communicate, not visually behave, if it were transformed into a voice interface?

- How would your text be styled and formatted?

- What dialogue elements do your links and calls to action and forms and buttons turn into? What prompts do they become, and how do they identify intent or winnow down that sense of what the user wants?

- What flow elements do your nav bars and sitemaps and menus and headings and footers turn into? Are they nodes, are they branches, are they recursive behaviors? How do you handle errors?

- And do your users’ interactions with your website reflect the dialogues you’ve written? Are they similar to how your website really works?

Can you use or apply that same mental map that they’ve encountered on your website, potentially, to the same call flow diagrams that you’ve planned for your interface?

And of course, in voice, the subtle issues on the web become very obvious problems in this realm of voice interface design, and we have to really think about things in very, very different ways, not just about how web elements transform into voice elements, but also how our web interfaces themselves transform into fully-fledged identities and fully-fledged people with our own biases, of course, against them.

Thank you very much and if you want more information about voice and voice usability and voice interfaces and a lot of what I spoke about today, check out my new book Voice Content and Usability at A Book Apart.

I’ll share some resources in the slides here, and thank you so much for having me today.

Preston So (he/him) is a product architect and strategist, digital experience futurist, innovation lead, designer and developer advocate, and author of Voice Content and Usability (A Book Apart, 2021), Gatsby: The Definitive Guide (O’Reilly, 2021), and Decoupled Drupal in Practice (Apress, 2018).

He has been a programmer since 1999, a web developer and designer since 2001, a creative professional since 2004, a CMS architect since 2007, and a voice designer since 2016.

A product leader at Oracle, Preston has led product, design, engineering, and innovation teams since 2015 at Acquia, Time Inc., and Gatsby. Preston is an editor at A List Apart, a columnist at CMSWire, and a contributor to Smashing Magazine and has delivered keynotes around the world in three languages. Preston is based in New York City, where he can often be found immersing himself in languages that are endangered or underserved.

preston.so • @prestonso

Resources

- “Personality for voice interfaces: Humanizing the most human of interfaces” (preston.so, June 22, 2021)

- “How to classify interactions for conversational interfaces” (preston.so, June 8, 2021)

- “Register, diglossia, and why it’s important to distinguish spoken from written conversational interfaces” (preston.so, June 2, 2021)

- “How we integrated Alexa with Drupal for Ask GeorgiaGov, the first voice interface for residents of Georgia” (preston.so, May 26, 2021)

- “Can voice assistants displace screen readers?” (preston.so, May 19, 2021)

- “Conversational maxims and the cooperative principle in voice interface design” (preston.so, May 11, 2021)

- “Voice interface design is about good writing, not just good design” (preston.so, April 16, 2020)

- “Affordance and wayfinding in voice interface design” (preston.so, March 3, 2020)

- “Building usable conversations” series (preston.so, January 18, 2019)

- “Usability testing for voice content” (A List Apart, April 9, 2020)

- “Ask GeorgiaGov: Answering Georgians’ questions with Alexa and Drupal” (VOICE Summit Newark, July 25, 2018)

- “Talk over text: Conversational interface design and usability” (Frontend United Utrecht, June 1, 2018)
